|

Hello! I am a machine learning researcher. I recently earned my PhD in computer science at Seoul National University, advised by Hyun Oh Song. I was a visiting scholar at New York University in 2024, hosted by Kyunghyun Cho. Before that, I interned at NAVER AI in 2018, where I worked on speech enhancement. I completed my BSc in Mathematics at Seoul National University in 2019. My studies were supported by the Korea Foundation for Advanced Studies (PhD) and the Presidential Science Scholarship (BSc). I'll be joining the Apple AI/ML Foundation Models team (NYC) as a Research Scientist! |

|

|

Future AI systems will require long-term interaction, continual personalization, large-database inference, and streamed input processing. I believe that efficient and effective context management is the key to enabling these capabilities. My current research focuses on improving contextual memory in AI models, addressing challenges in attention mechanisms (Context Memory), KV caching (KVzip), and tokenization. Previously, I led ML research projects in synthetic data generation, dataset cleaning, causal discovery for human genes, and speech enhancement. |

|

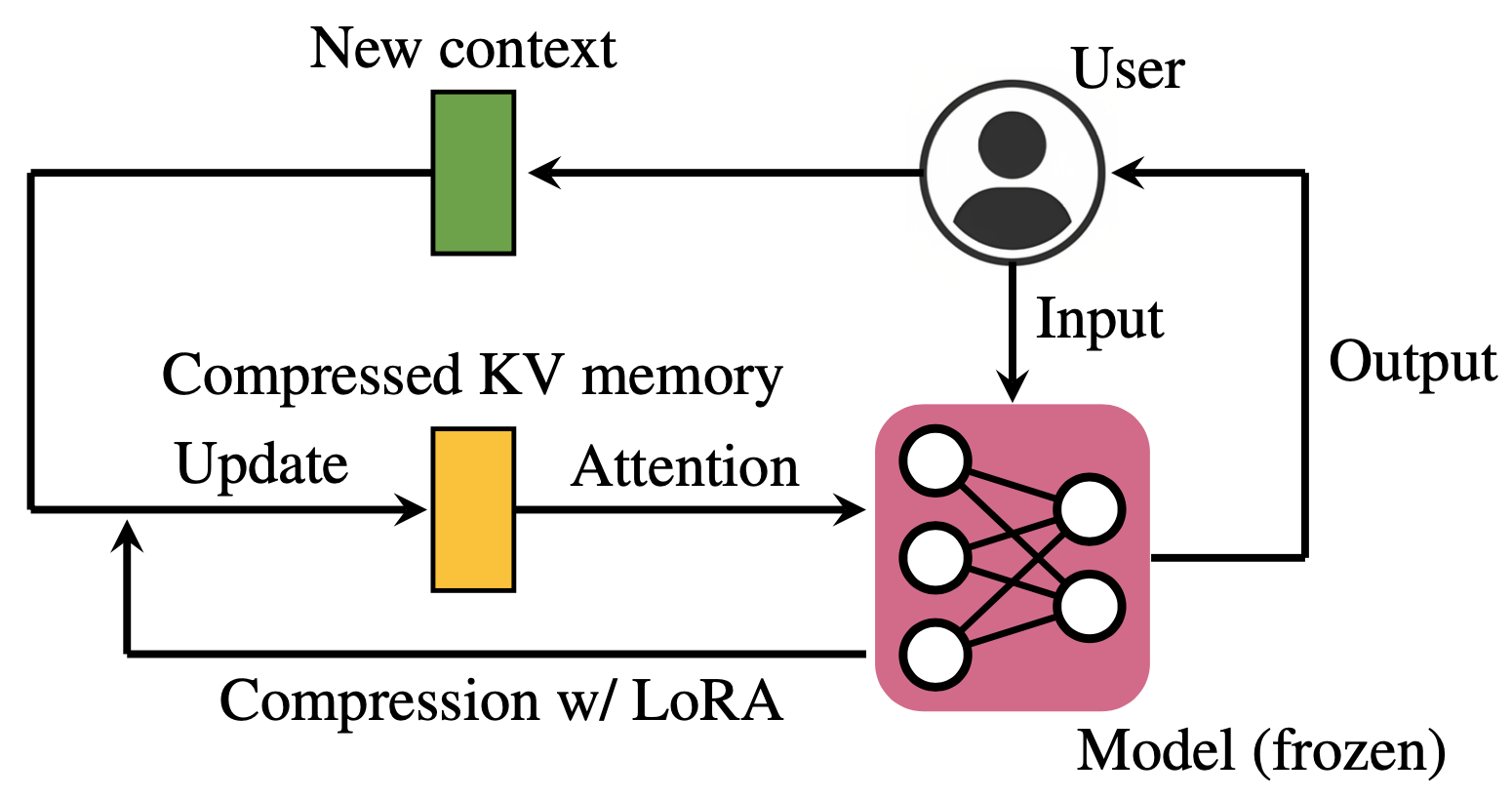

Jang-Hyun Kim, , , , , NeurIPS, 2025 - Oral Presentation (77/21575=0.35%) Paper | Code | Blog | Bibtex |

|

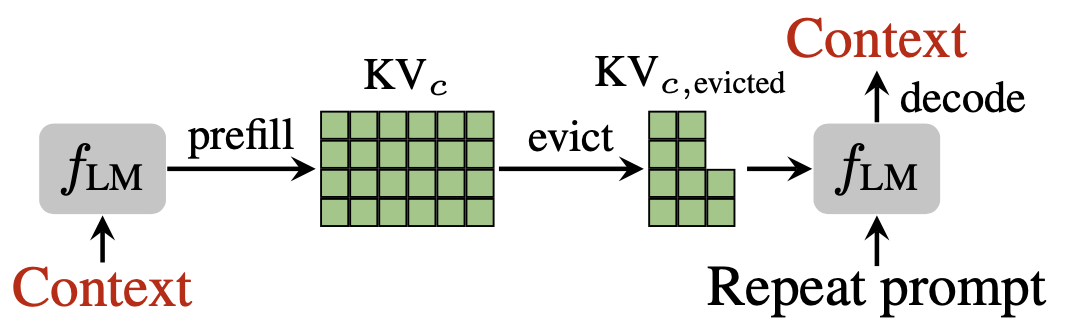

Jang-Hyun Kim, , , , TMLR, 2025 Paper | Code | LM podcast | Bibtex |

|

Jang-Hyun Kim, , *, * ICLR, 2024 Paper | Code | Project Page | Bibtex |

|

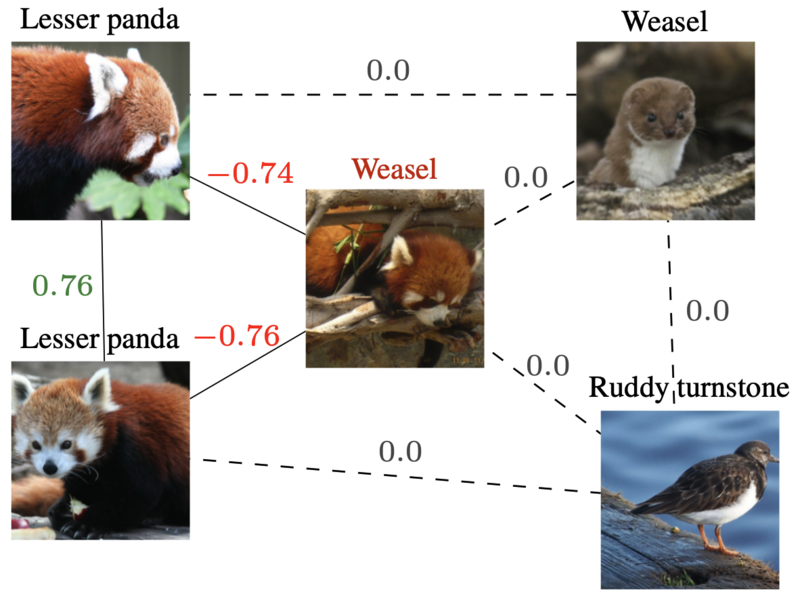

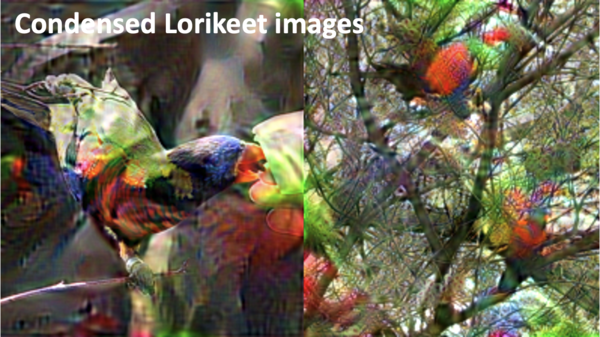

Jang-Hyun Kim, , NeurIPS, 2023 Paper | Code | Bibtex |

|

Jang-Hyun Kim, , , , , , , ICML, 2022 Paper | Code | Bibtex |

|

*, *, Jang-Hyun Kim, NeurIPS, 2021 Paper | Code | Bibtex |

|

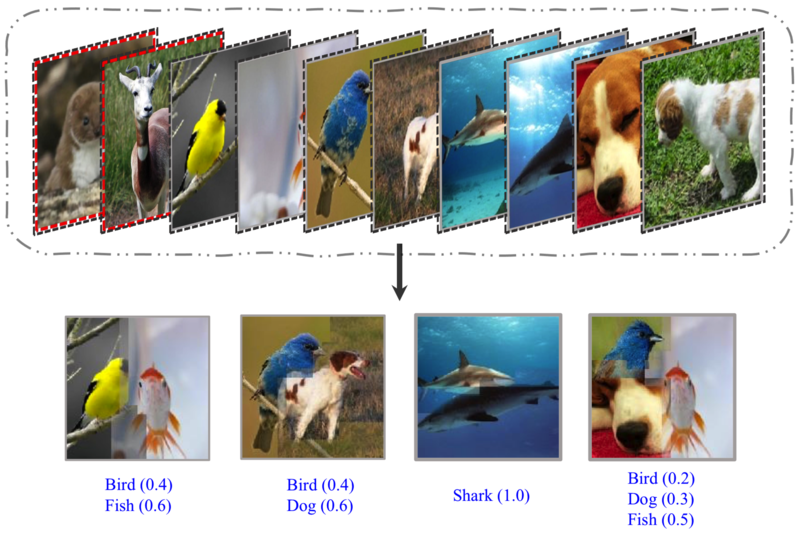

Jang-Hyun Kim, , , ICLR, 2021 - Oral Presentation (53/2997=1.7%) Paper | Code | Bibtex |

|

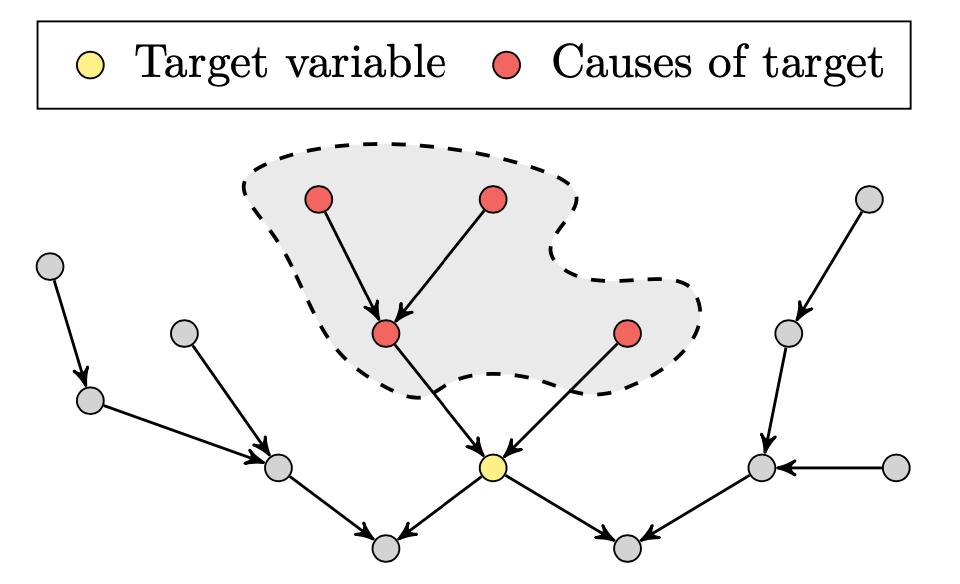

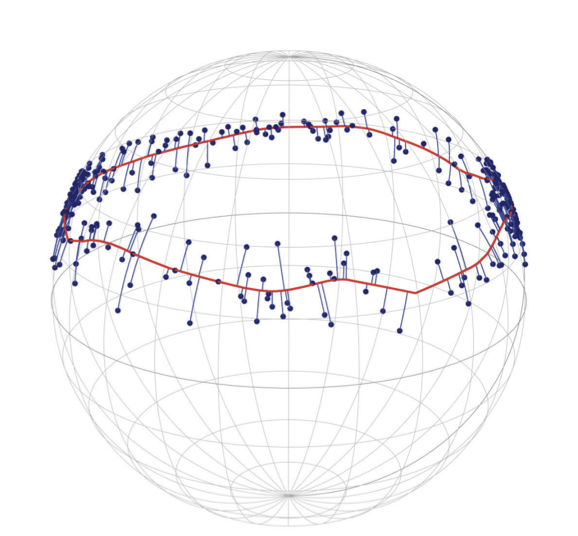

*, Jang-Hyun Kim*, TPAMI, 2021 | R Journal, 2022 Paper | R Journal | Code | Bibtex |

|

Jang-Hyun Kim, , ICML, 2020 Paper | Code | Bibtex |

|

, Jang-Hyun Kim, , , , arXiv, 2019 Paper | Bibtex |

|

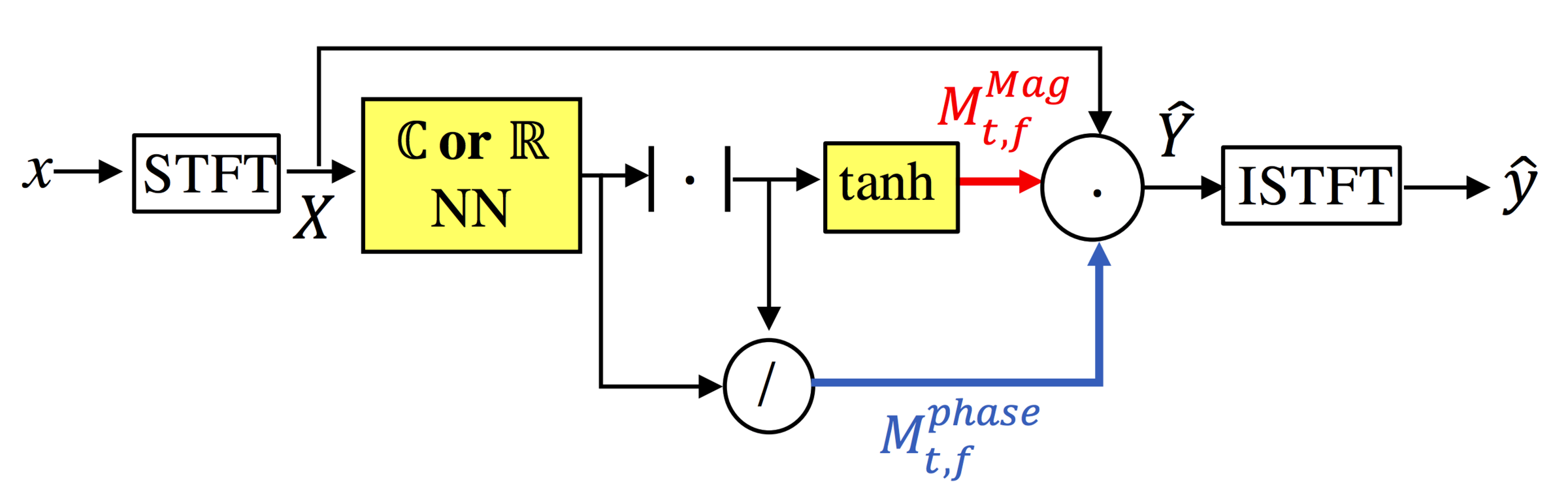

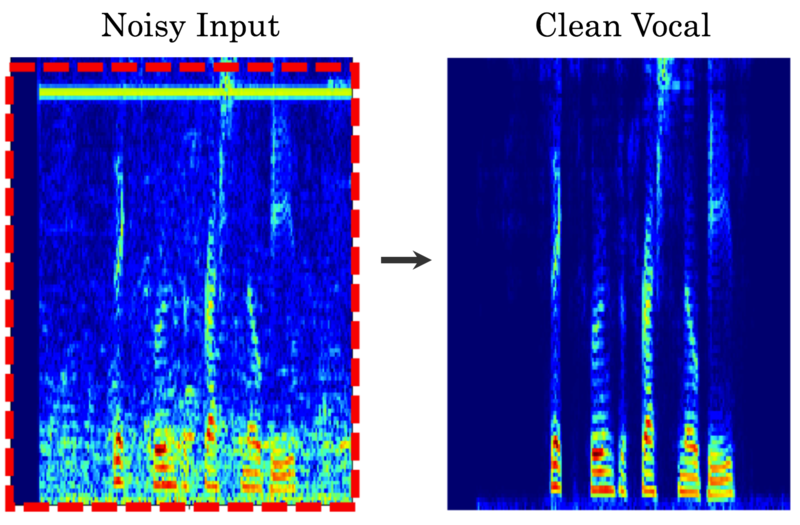

Jang-Hyun Kim*, *, , , arXiv, 2018 Paper | Code | Bibtex | Demo |

|

Code | Kaggle

|

|

Code |

|

Code |

|

Template based on Jon Barron's website. |